If you compare 40 MB/s 4K R/W to an HDD, for example, that would still be pretty impressive. One thing is that you are using LFU-R, which means it does NOT keep recent data (for example, benchmark data) but keeps the data you use most frequently. Could you check LRU? Do the 4K rates improve when you re-run the 4K test a couple of times?

When asking for PrimoCache's "block size", we don't mean the stripe size you configured for your FW RAID in the controller settings. In case it is a 4K block size, you could indeed try to increase it a few steps and see if the result gets better.

In the previous post, Analytic Workstations – Part I, I touched on my disappointment with the performance of a new workstation that I had just built. I expected a bit more “oomph” right out of the box, especially for the smaller datasets.
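To illustrate the LFU vs. LRU point, here is a minimal Python sketch. It assumes textbook LRU and plain LFU eviction; it is not PrimoCache's actual LFU-R implementation, and the block names and cache capacity are made up for the example. It replays some frequently used "everyday" blocks followed by a one-off benchmark run and shows which blocks each policy keeps.

```python
from collections import Counter, OrderedDict

def simulate_lru(accesses, capacity):
    """Return the blocks left in a textbook LRU cache after replaying accesses."""
    cache = OrderedDict()
    for block in accesses:
        if block in cache:
            cache.move_to_end(block)       # mark as most recently used
        else:
            if len(cache) >= capacity:
                cache.popitem(last=False)  # evict the least recently used block
            cache[block] = True
    return set(cache)

def simulate_lfu(accesses, capacity):
    """Return the blocks left in a plain LFU cache after replaying accesses."""
    counts = Counter()  # access counts for the blocks currently cached
    for block in accesses:
        if block not in counts and len(counts) >= capacity:
            victim = min(counts, key=counts.get)  # least frequently used block
            del counts[victim]
        counts[block] += 1
    return set(counts)

# Hypothetical workload: two hot blocks used often, then a one-off benchmark pass.
everyday = ["a", "b"] * 5
bench = ["x1", "x2", "x3"]
workload = everyday + bench

lru_result = simulate_lru(workload, capacity=3)
lfu_result = simulate_lfu(workload, capacity=3)
print("LRU keeps:", sorted(lru_result))
print("LFU keeps:", sorted(lfu_result))
```

In this toy model LRU ends up holding only the benchmark blocks, while LFU holds on to the two hot blocks and cycles the benchmark blocks through the one remaining slot. That is the reason a single benchmark pass can look much worse under a frequency-based policy, and why re-running the test or switching to LRU is worth trying.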

Maybe the SuperCache devs found a workaround or something. Still, I'd say that whether you have 40 MB/s or 350 MB/s 4K R/W doesn't make any significant difference under real working conditions, where 4K R/W usually occurs only in small amounts.

#Fancycache vs supercache drivers

The problem is assuming it's PrimoCache's fault only because SuperCache does not have this issue. That doesn't mean it isn't the drivers' or the FW RAID controller's fault.

Yes, I bought 4 SSDs, and this still saves me quite some money compared to a decent PCI-e card (I got these for a little over 400 EUR, getting 480GB of storage in total as a result). Next to that, all external controllers found on PCI-e cards add to the system's boot time (some as much as 18-20 seconds!), and achieving short boot times was a goal in itself. I hope this clarifies a few things on my side.

I'm just saying that using FW RAID0, or even HW RAID, whether it is based on an SSD-internal controller (PCIe cards) or on hardware card controllers with SSD drives at all, is not what increases 4K. 4K scenarios are a little less significant overall, since you barely know a real-life use case that makes use of large amounts of 4K R/W. I'm just saying a single-drive SSD will outperform any RAID0 SSD array in the 4K discipline, while on the other hand that discipline itself isn't of such high significance.

PCIe cards are great, because they maintain separate SATA3 controllers, each at ~600 MB/s per internal RAID0 port, while the RAID0 control is completely hardcoded into the firmware, often with an LSI bridge chip. These cards offer superior sequential performance. The good thing is that these cards report to the BIOS/OS as a single simple drive. You do not need any extra CPU cycles to maintain data on such a storage card; that's another benefit. It is not true that systems with a PCIe SSD card added need a significantly longer boot-up. It is not true that these cards are more expensive. Whatever, I don't want to start a flame war over that. I also agree with you that your RAID or PrimoCache configuration does not operate well.
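As a rough illustration of why RAID0 helps sequential transfers much more than single 4K requests, here is a small Python sketch. It assumes a simplified striping model (stripes assigned to member drives round-robin, no controller caching or queueing), and the function name is made up for the example. It computes which member drives a single request touches.

```python
def drives_touched(offset, length, stripe_size, num_drives):
    """Return the set of RAID0 member indices that one request touches.

    Simplified model: stripe k lives on drive k % num_drives.
    """
    first_stripe = offset // stripe_size
    last_stripe = (offset + length - 1) // stripe_size
    return {s % num_drives for s in range(first_stripe, last_stripe + 1)}

STRIPE = 4096  # 4K stripe size, as mentioned in the thread
DRIVES = 4     # 4-drive RAID0 array

# One aligned 4K read lands on exactly one member drive.
print(drives_touched(offset=8192, length=4096, stripe_size=STRIPE, num_drives=DRIVES))

# A 1 MiB sequential read is spread across all four members.
print(drives_touched(offset=0, length=1024 * 1024, stripe_size=STRIPE, num_drives=DRIVES))
```

Under this model an isolated 4K read is served by one drive only, so it cannot be faster than that drive on its own, while a large sequential read keeps every member busy at once.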

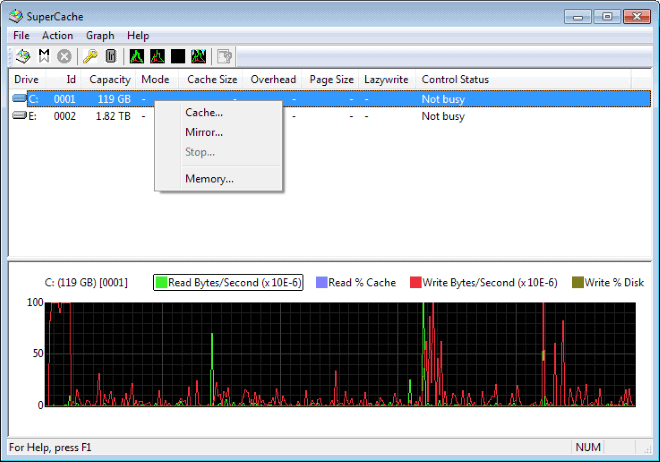

I have been using the same cache specs in both pieces of software: 2048 MB or 4096 MB cache size. Any idea why PrimoCache seems to be hitting significantly lower 4K read speeds? Here's some information on the hardware I am using: 4 x SanDisk Extreme II 120GB SSDs in RAID0.

(I ask because these are really the ones that matter.)

Basically all of the information you asked for was in my OP (cache size = 4096 MB, stripe size = 4K). You seem to be posting some very impressive speeds (even though CDM does not bench with incompressible data, yet this usually does not color the results by more than 10-15%). I did not mention the algorithm (my bad); I'm using LFU-R. Yes, I am using the on-board RAID controller (Intel's first with a 6 x SATA3 interface, which would imply a lot of headroom before the controller gets saturated; sadly this was not the case). I know 4K I/O is very CPU intensive, but I hope we can both agree that for the current mainstream market it doesn't get much better than a 4770K. So even if this setup is not ideal for achieving the highest 4K rates, I still get around 350 MB/s 4K reads with SuperCache (and from what I have seen and read so far, PrimoCache can often outperform SuperCache, which is where it shows its diversity). Also, there is a definite reason I did not buy a PCI-e SSD card.

I see a lot of people on these forums using CrystalDiskMark for posting their results. I always use AS SSD for benchmarking, as it seems to give more accurate results as to what is happening (whereas CDM tends to color most numbers much more positively). I have some past experience using SuperCache as a RAM caching tool, and I noticed that my 4K random read speeds seem to be much, much lower than when I was using SuperCache before. With SuperCache I was hitting around 300 MB/s random 4K read speeds, while with PrimoCache I seem to be at around 34 MB/s at most!
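To put the 34 MB/s and 300 MB/s figures into perspective, here is a small Python sketch that converts 4K throughput into approximate I/O operations per second. It assumes 1 MB = 1,000,000 bytes and a 4096-byte block; the benchmark tool may count megabytes differently, so treat the results as ballpark values.

```python
def mb_per_s_to_iops(mb_per_s, block_bytes=4096, mb_bytes=1_000_000):
    """Convert throughput in MB/s to I/O operations per second for a given block size."""
    return mb_per_s * mb_bytes / block_bytes

primocache_iops = mb_per_s_to_iops(34)    # reported 4K random read with PrimoCache
supercache_iops = mb_per_s_to_iops(300)   # reported 4K random read with SuperCache

print(f"PrimoCache: ~{primocache_iops:,.0f} IOPS")
print(f"SuperCache: ~{supercache_iops:,.0f} IOPS")
print(f"Ratio: ~{supercache_iops / primocache_iops:.1f}x")
```

Whatever the exact megabyte definition, the ratio between the two results stays the same, which is the number that matters for this comparison.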

#Fancycache vs supercache software

So far I think the software looks good, and it seems to be working very nicely.

#Fancycache vs supercache trial

I recently started my trial with your PrimoCache software.